In machine learning applications, it's more common to use small kernel sizes, and direct convolutions are still faster when the inputs are small, so deep learning libraries like PyTorch and TensorFlow only provide implementations of direct convolutions. But there are plenty of real-world use cases with large kernel sizes, where Fourier convolutions are more efficient. In those cases, we can use the Convolution Theorem to compute convolutions in frequency space, and then perform the inverse Fourier transform to get back to position space. The algorithm that combines discrete convolution with the fast Fourier transform, named the DC-FFT algorithm, includes two routes of problem solving: DC-FFT/Influence. Now, I'll demonstrate how to implement a Fourier convolution function in PyTorch. It should mimic the functionality of torch.nn.functional.convNd and leverage FFTs behind the curtain without any additional work from the user. As such, it should accept three Tensors (signal, kernel, and optionally bias) and the padding to apply to the input.
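To make the interface described above concrete, here is a minimal one-dimensional sketch. The name `fft_conv1d` and its exact signature are my own assumptions for illustration, not an existing PyTorch function, and the sketch assumes an integer `padding` and a kernel no longer than the padded signal. It follows the convention of `torch.nn.functional.conv1d`, which computes a cross-correlation, so the kernel spectrum is conjugated before multiplying.

```python
import torch
import torch.nn.functional as F
from torch.fft import rfft, irfft


def fft_conv1d(signal, kernel, bias=None, padding=0):
    """Hypothetical FFT-based stand-in for F.conv1d (stride 1, no dilation).

    signal: (batch, in_channels, length)
    kernel: (out_channels, in_channels, kernel_length)
    bias:   (out_channels,) or None
    """
    # Zero-pad both ends of the signal, as conv1d does for an integer padding.
    signal = F.pad(signal, (padding, padding))
    n = signal.size(-1)
    k = kernel.size(-1)

    # Transform both inputs at length n; rfft zero-pads the shorter kernel.
    signal_fr = rfft(signal, n=n)  # (batch, in_channels, n // 2 + 1)
    kernel_fr = rfft(kernel, n=n)  # (out_channels, in_channels, n // 2 + 1)

    # Cross-correlation in position space corresponds to multiplying by the
    # complex conjugate of the kernel spectrum. The einsum also sums over
    # input channels.
    output_fr = torch.einsum("bif,oif->bof", signal_fr, kernel_fr.conj())
    output = irfft(output_fr, n=n)

    # Keep only the positions that are free of circular wrap-around.
    output = output[..., : n - k + 1]

    if bias is not None:
        output = output + bias.view(1, -1, 1)
    return output
```

Under these assumptions, calling `fft_conv1d(signal, kernel, bias=bias, padding=4)` should agree with `F.conv1d(signal, kernel, bias=bias, padding=4)` up to floating-point error. Strides, dilation, and higher-dimensional inputs are left out to keep the sketch short.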

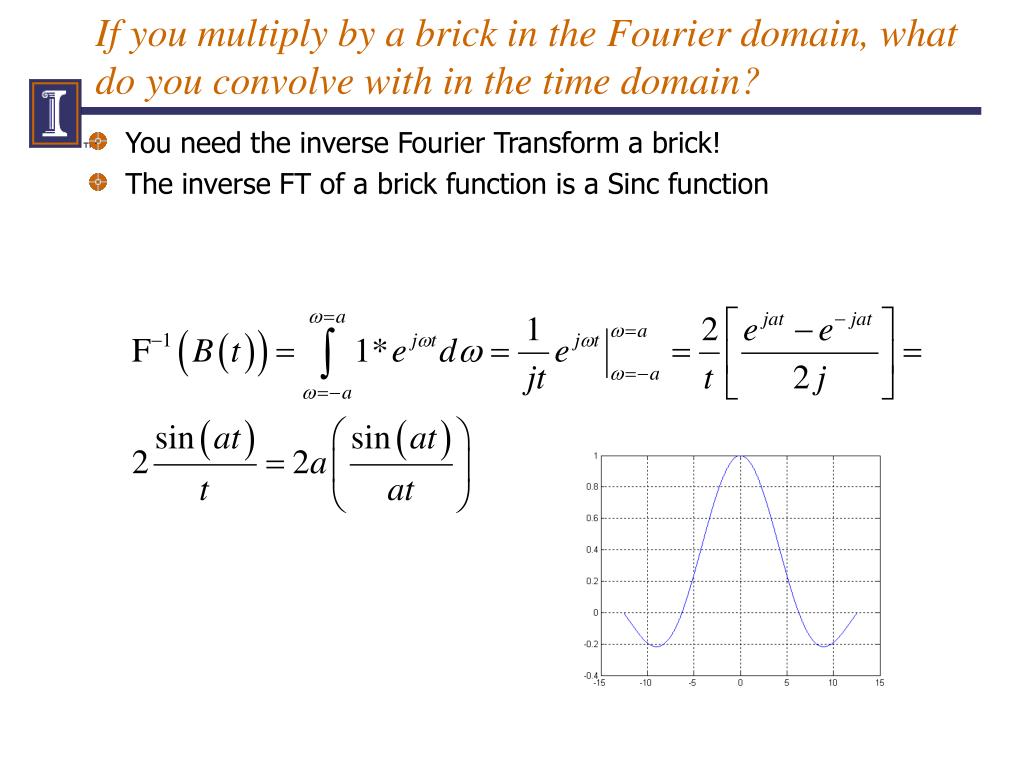

The Fourier transform of a convolution is the pointwise product of Fourier transforms. In other words, convolution in one domain (e.g., time domain) corresponds to pointwise multiplication in the other domain (e.g., frequency domain). Why should we care about all of this? Because the fast Fourier transform has a lower algorithmic complexity than convolution. Direct convolutions have complexity O(n²), because we pass over every element in g for each element in f. Fast Fourier transforms can be computed in O(n log n) time. They are much faster than convolutions when the input arrays are large.
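The theorem and the two complexity figures can be checked with a small dependency-free sketch. The function names (`fft`, `direct_convolve`, `fft_convolve`) are my own, and the recursive radix-2 FFT is only for illustration; in practice you would rely on a library FFT. The direct version is the nested O(n²) loop over f and g, while the FFT version zero-pads both inputs, multiplies their transforms pointwise, and transforms back.

```python
import cmath


def fft(x, inverse=False):
    """Recursive radix-2 FFT (unnormalized). len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    sign = 1 if inverse else -1
    even = fft(x[0::2], inverse)
    odd = fft(x[1::2], inverse)
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(sign * 2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def direct_convolve(f, g):
    """Linear convolution by definition: every element of g for each of f."""
    out = [0.0] * (len(f) + len(g) - 1)
    for i, fi in enumerate(f):
        for j, gj in enumerate(g):
            out[i + j] += fi * gj
    return out


def fft_convolve(f, g):
    """Linear convolution via the convolution theorem."""
    size = len(f) + len(g) - 1
    n = 1
    while n < size:
        n *= 2
    # Zero-pad to a power of two so the circular result equals the linear one.
    f_fr = fft(list(f) + [0.0] * (n - len(f)))
    g_fr = fft(list(g) + [0.0] * (n - len(g)))
    # Pointwise product in frequency space, then back to position space.
    product = [a * b for a, b in zip(f_fr, g_fr)]
    result = fft(product, inverse=True)
    return [value.real / n for value in result[:size]]
```

For any two real-valued lists, `fft_convolve(f, g)` and `direct_convolve(f, g)` should match up to floating-point rounding.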
